PURPOSE

I continue to receive countless questions from various walks of Bajan society about the Trident ID card and the national digital ID program. This is stark evidence that the Government of Barbados HAS NOT done an adequate and effective job of alleviating the concerns of the public. As such, I wanted to clarify once and for all the pros and cons of digital ID systems, and answer the million-dollar question I am repeatedly asked: “Should I get the Trident ID card?”

INTRODUCTION

Digital identity (ID) has become the topic of the moment in Barbados, given the government’s poor implementation, failure to address the fears and anxieties of the public, and generally ineffectual communication to the average person on the street as to why they need digital ID and what value it will bring to their lives. The government has set out to provide a single digital identity to all residents/citizens through the collection, storage, and use of their biographic data (e.g., name, address, date of birth, gender, national registration number, etc.) and possibly their biometrics (e.g., fingerprints, iris scans, facial scans, etc.) as the primary means of establishing and verifying their identity. They will achieve this through a legally mandated, centralised national digital ID system.

Governments, international organizations, and multilateral banks (e.g., International Monetary Fund, World Bank) argue that digital ID systems provide benefits such as more effective and efficient delivery of government services; poverty reduction and welfare programs; financial inclusion through better access to banking and other products/services; reduced corruption; and preservation of national security interests. Multilateral banks are providing significant funding to developing countries to implement digital ID. In some cases, they’re even making the implementation of digital ID systems a ‘condition’ of loan agreements.

Critics maintain that digital ID systems may not actually guarantee more effective access to social and economic benefits, enhance service delivery, or improve governance, while at the same time raising serious issues, including worries about how they are developed and managed; social exclusion and discrimination; privacy and data protection; cybersecurity; and major risks for human rights. With regard to human rights, they threaten the right to privacy, freedom of movement, freedom of expression, and other protected rights. Additionally, since they usually involve the creation and maintenance of centralised databases of sensitive personal data, they are also prone to breaches by hackers or abuse/misuse by government institutions. These issues may lead to digital IDs becoming widespread tools for identification, surveillance, persecution, discrimination, and control, especially where identities are linked to biometrics and made mandatory.

For a more detailed explanation of both sides of the debate, please see below the PROS and CONS related to digital ID systems.

PROS

Easier access to services: digital ID systems can enable more efficient digital transformation across the local economy and increase Barbados’ participation in the global digital economy, especially given that many transactions – local and international – require personal identification. With Barbadians facing fewer obstacles to proving their identity, commercial activities (including e-commerce) and government services (including e-government) become more accessible and effective.

Faster and cheaper transactions: the use of digital ID can allow for reductions in costs and response times, resulting in speedier execution, less red tape, and the availability of more responsive and relevant services. The quickness and trust with which a person’s identification can be verified allows for cheaper and more efficient interactions for all involved.

Fraud reduction: digital ID systems can offer several benefits in terms of online security, thus reducing the occurrence of online scams, fraud, and personal data breaches. A number of countries that have implemented digital ID have experienced significant decreases in fraud, saving them tens and even hundreds of millions of dollars.

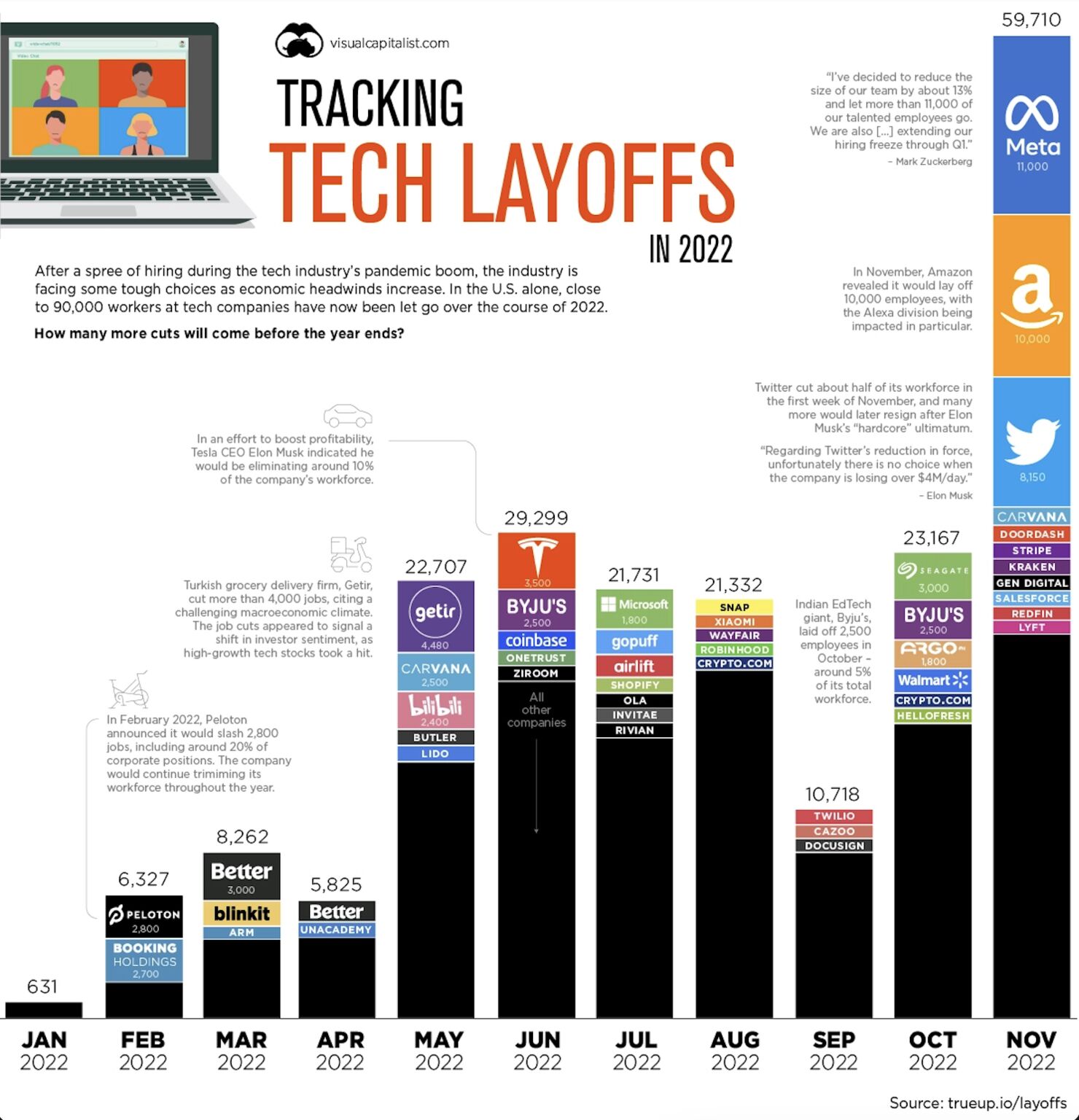

The graphic below outlines several ways in which digital ID can be used based on the roles played by organizations and individuals (Source: McKinsey).

The four (4) main areas of direct economic value for individuals have been identified as increased access to financial services, improved employment opportunities, greater agricultural productivity, and time savings. The five (5) highest sources of value for institutions – both the private and public sectors – are cost savings, fraud prevention, increased revenues from goods and services, improved employee productivity, and higher tax revenues.

CONS

Privacy and security: digital ID systems process billions of data points of our private information, regularly without our consent or knowledge. This information can include biographic details (national registration number, date of birth, gender), biometrics (facial recognition, iris scans, fingerprints), banking and transactional data, and location-based information when digital ID is used, for example, on public transportation (the government has expressed plans to use the Trident ID for cashless payments on buses). The centralisation of so much data, excessive sharing of personal data without user consent, inability to control your personal data, exposure to cyber attacks and data breaches, and in worst-case scenarios – mass surveillance by corporations and governments – are all issues which show the potential negative impact of digital ID.

Discrimination, biases and exclusion: the Barbados Digital Identity Act has a number of clauses which generate concerns about discrimination and exclusion. The Act states in several places that the digital ID will be required for persons to be added to the register of voters, to vote in elections, to access public and private services, and to obtain a driver’s license. There are no provisions in the Act for mandatory accessibility features in the digital ID and related services. As such, persons with disabilities may be excluded (e.g., the Trident ID website currently DOES NOT have several accessibility features for the disabled). Digital ID technologies are also, at the end of the day, developed by humans, and through poorly designed algorithms and data analytics they can reinforce their developers’ biases. Discrimination against key communities such as immigrants, LGBTQ+ persons, the homeless, and the disabled, among others, has been highlighted in many digital ID related studies globally.

Technical errors: unintended consequences can occur that lead to restricted access to critical services (e.g., failures in authentication at points of service with no redundancy; websites that aren’t user friendly or stable; duplicate or inaccurate records; inability to add essential information; or the lack of reliable technical support). The government must fully consider availability risks and identify user-centric and privacy-enabling solutions to mitigate them. In African and Asian countries, numerous instances of technical errors have been uncovered, presenting citizens with major challenges.

Deployment challenges: five key problems exist, which are the lack of funding to maintain secure cyber systems and to hire or retain critical human resources to administer them; unequal access to mobile Internet and smartphones – the technology with the most potential to drive the uptake of digital ID; dependency on a specific technology or vendor; low trust in government; and the difficulty of rolling out in rural areas.

SHOULD YOU GET THE TRIDENT ID CARD?

As I have stated before, my concern is not particularly with the Trident ID card. The card is only one small piece of the overall digital ID ecosystem. My biggest concerns are as follows:

Poor legislation underpinning the digital ID system: Digital ID must be supported by a legal and regulatory framework that fosters trust in the system, prevents abuse such as warrantless and disproportionate surveillance, guarantees data privacy and security, prevents discrimination, and maintains provider (government and corporations) accountability. This includes laws for digital ID management along with laws and regulations for e-government, privacy and data protection, computer misuse, data sovereignty/localisation, electronic transactions, limited-purpose ID systems, accreditation of participants, and freedom of information, among others. Unfortunately, a number of these laws do not exist in Barbados at this time, and where they do, the language is problematic, enforcement is deeply lacking, or the legislation is outdated.

Government’s atrocious record in terms of protecting IT systems and the personal data privacy of individuals: The Government of Barbados DOES NOT have the resources (people, processes, or technologies) to secure complex IT systems and provide consistent privacy-enabling solutions. If they did, there would not be so many successful cyber-attacks and data breaches of government online systems in recent years (e.g., Queen Elizabeth Hospital, Ministry of Information and Smart Technology, Immigration Department, Barbados Police Service, and many others). Until government invests significantly in building their capacity in these areas, their IT systems and the personal data of Barbadians will be AT RISK.

The communication (or lack thereof) by government addressing the public angst around their digital ID program: Government has not effectively articulated the benefits of digital ID, its value to the average person on the street (in real and meaningful terms), its potential disadvantages and risks, what they are doing to manage these risks, and what Barbadians can do to protect themselves. Instead, they have chosen to evade questions, avoid public discussion with experts involved, and turn their resources towards attacking private citizens who are expressing concerns.

In 2018, I conducted a European Union (EU) cybersecurity assessment for the Government of Barbados. In the report, I clearly stated:

Trust in the Internet and in the use of online services is critical to developing a thriving local Internet economy and to participating widely in the global digital economy. Low trust in the Internet, e-government services, and e-commerce services hampers the government, businesses and consumers from fully taking advantage of all the economic benefits the Internet has to offer. Given the high fixed broadband and mobile data penetration rates in Barbados, this is especially concerning.

European Union Consultancy to Develop a Government Cybersecurity Assessment and Strategic Roadmap – Cybersecurity Assessment Report (Authored by Niel Harper)

From 2018 to the present day, the government has failed to address the low levels of trust or their lack of expertise in delivering secure and privacy-respecting IT solutions, all of which are undoubtedly preventing them from delivering their digital transformation and modernisation agenda (including the implementation of the digital ID).

Ultimately, Barbadians need to decide for themselves if the value of obtaining the Trident ID outweighs the associated risks. I cannot make this decision for anyone. All I can do is educate and build awareness, and try to put some pressure on the government to be more accountable and take greater responsibility for protecting citizens from the negative effects of digital ID, mass personal data processing, cyber attacks and data breaches, human rights violations, online fraud, and other harms resulting from widespread government use of information and communication technologies (ICTs).

ADDITIONAL RESOURCES

FACT CHECK: The Electoral and Boundaries Commission’s Response

Why the Barbados Election Least Data Leak is Problematic – And How It Could Have Been Prevented

Comments on the National Identity Management System Act

Too Many Unanswered Questions: The Barbados National Digital Identification

Creating a good ID system presents risks and challenges, but there are common success factors

What is a digital identity ecosystem?

Understanding the risks of Digital IDs